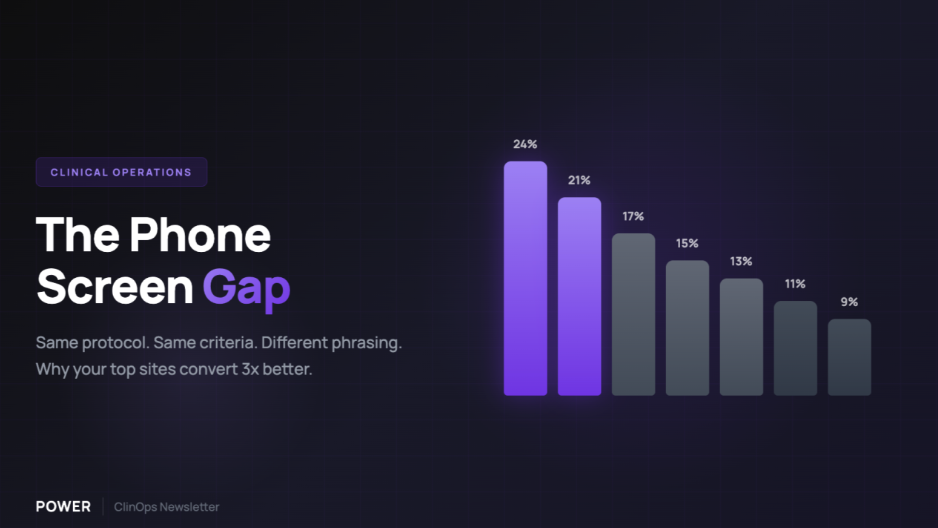

Many sponsors assume their sites are running similar recruitment processes. They're often not.

Across a 50-site study, you may have 50 different approaches to that first patient phone screen. Different questions. Different order. Different objection handling. Different conversion rates.

The problem isn't underperforming sites. It's invisible variance.

Say Site A converts 20% of phone screens and Site B converts 10%. Sponsors typically attribute the gap to referral quality or patient demographics. Sometimes that's accurate. Often, it's the screening call itself.

But you can't address what you can't see.
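Seeing it starts with putting every site's phone screen conversion on the same scale. Here is a minimal sketch in Python, assuming you can export two counts per site: completed phone screens and screens that advanced to the next step. The `SiteScreens` record, its field names, and the counts are hypothetical, chosen only to mirror the 20% vs. 10% example above.

```python
from dataclasses import dataclass


@dataclass
class SiteScreens:
    site: str
    phone_screens: int  # completed first phone screens (hypothetical field)
    passed: int         # screens that advanced to the next step (hypothetical field)


def conversion_rate(s: SiteScreens) -> float:
    """Share of phone screens that advanced; 0.0 if the site has no screens yet."""
    return s.passed / s.phone_screens if s.phone_screens else 0.0


# Illustrative counts only, not real study data.
sites = [
    SiteScreens("Site A", phone_screens=200, passed=40),
    SiteScreens("Site B", phone_screens=150, passed=15),
]

rates = {s.site: conversion_rate(s) for s in sites}
best = max(rates, key=rates.get)
worst = min(rates, key=rates.get)
gap_ratio = rates[best] / rates[worst]  # assumes the lowest rate is above zero

# List sites from highest to lowest conversion, then summarize the spread.
for site, rate in sorted(rates.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{site}: {rate:.0%}")
print(f"{best} converts at {gap_ratio:.1f}x the rate of {worst}")
```

A table like this doesn't explain the gap. It only shows where to start listening to calls.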

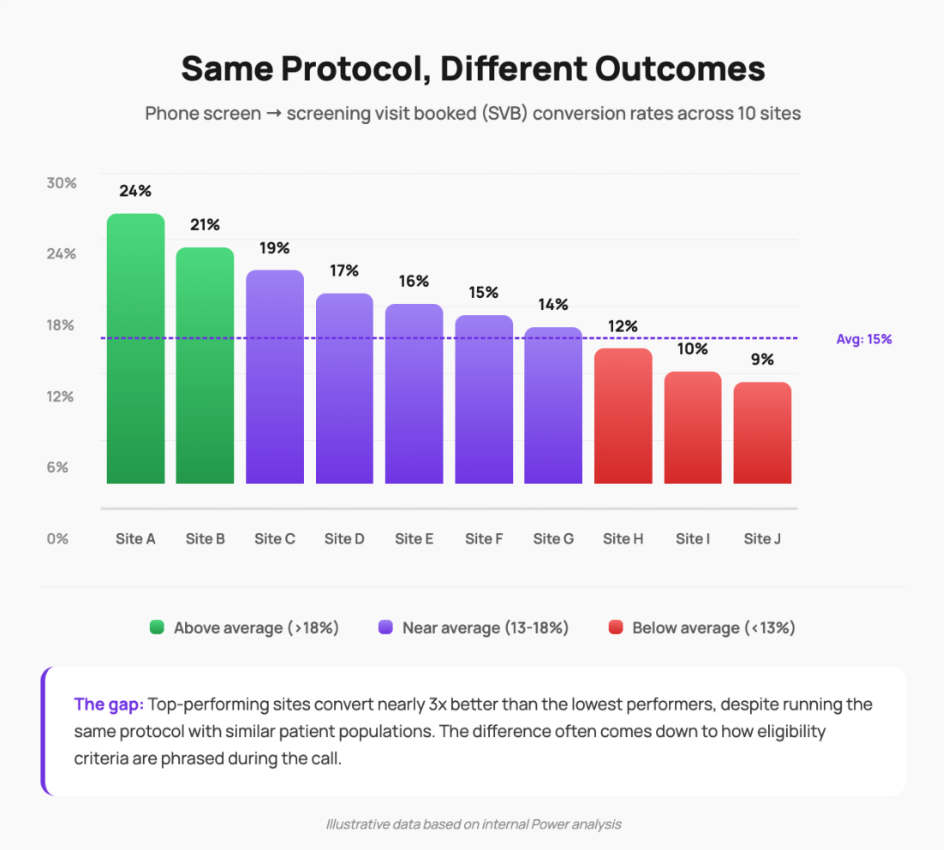

The gap: Top-performing sites convert at nearly 3x the rate of the lowest performers, despite running the same protocol with similar patient populations. The difference often comes down to how eligibility criteria are phrased during the call.

Illustrative data based on internal Power analysis

A recent example from our network:

We ran this analysis for a recruitment program we're supporting. Dozens of sites, same protocol, substantially different phone screen conversion rates.

When we investigated, we found something notable: just 2 criteria in the protocol were driving roughly 80% of the variance in outcomes across sites (internal Power analysis, Q4 2025).

Same questions on paper. Materially different ways of asking them.

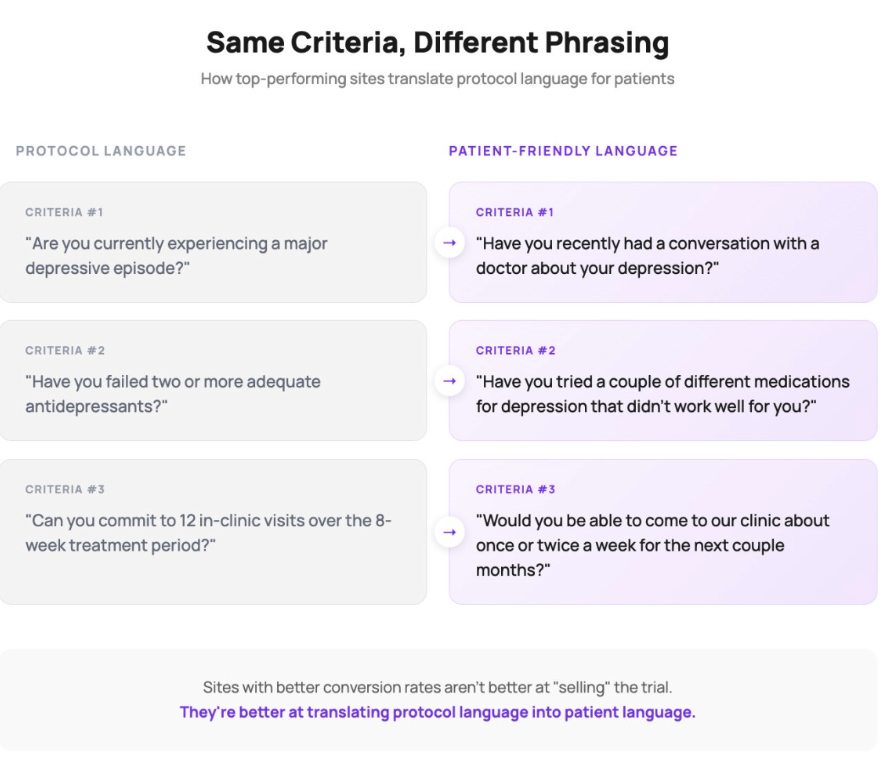

There are many ways to ask about the same medical history. And the way you ask matters considerably, because patients often struggle with clinical framing. One site asks "Are you currently experiencing a major depressive episode?" Another asks "Have you recently had a conversation with a doctor about your depression?" Same intent. Different patient response rates.

The sites with better conversion weren't more effective at "selling" the trial. They were better at translating protocol language into patient language.